Mohamed Ahmed Hamoda

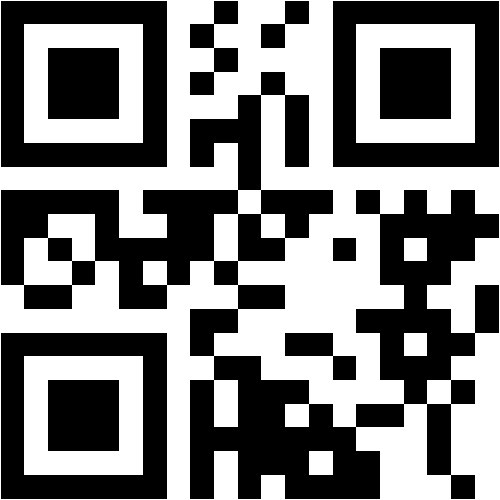

@asmarya.edu.ly

Department of Mathematics / Faculty of Sciences

Alasmarya Islamic University

RESEARCH INTERESTS

Optimization

Scopus Publications

Y Salih, M A Hamoda, Sukono, and M Mamat

IOP Publishing

Mohamed Hamoda, Mustafa Mamat, Mohd Rivaie, and Zabidin Salleh

Hikari, Ltd.

This paper presents a modified conjugate gradient method for solving large-scale unconstrained optimization problems. The method possesses the sufficient descent property under the strong Wolfe-Powell (SWP) line search, and its global convergence is proved for the SWP line search under some conditions. Computational results on a set of 138 unconstrained optimization test problems show that the new conjugate gradient algorithm appears to converge more stably and outperforms other similar methods in many situations.

Mohamed Hamoda, Mohd Rivaie, Abdelrhaman Abshar, and Mustafa Mamat

AIP Publishing LLC

Conjugate gradient (CG) methods play an important role in solving unconstrained optimization problems, especially large-scale ones. In this paper, we compare the performance profiles of the classical conjugate gradient coefficients FR and PRP with those of three new βk. These three new βk possess global convergence properties under the exact line search. Preliminary numerical results show that the three new βk are very promising and efficient when compared to the CG coefficients FR and PRP.
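As a rough illustration of how the classical FR and PRP coefficients named in this abstract enter a conjugate gradient iteration, the sketch below applies both to a small convex quadratic, where the exact line search has a closed form. It is not the method from the paper: the three new βk are not stated here, and the function name `cg_quadratic`, the test matrix, and the tolerance are assumptions chosen for illustration only.

```python
def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def matvec(A, v):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [dot(row, v) for row in A]


def cg_quadratic(A, b, x0, beta_rule="FR", tol=1e-10, max_iter=100):
    """Minimize f(x) = 0.5 x^T A x - b^T x for symmetric positive definite A.

    Uses the exact line search, which is available in closed form for a
    quadratic, and either the FR or the PRP coefficient.
    Returns the final iterate and the number of iterations performed.
    """
    x = list(x0)
    g = [ax - bi for ax, bi in zip(matvec(A, x), b)]  # gradient A x - b
    d = [-gi for gi in g]                             # first direction: steepest descent
    for k in range(max_iter):
        if dot(g, g) ** 0.5 < tol:
            return x, k
        Ad = matvec(A, d)
        alpha = -dot(g, d) / dot(d, Ad)               # exact minimizer along d
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = [gi + alpha * adi for gi, adi in zip(g, Ad)]
        if beta_rule == "FR":
            # Fletcher-Reeves: ||g_new||^2 / ||g||^2
            beta = dot(g_new, g_new) / dot(g, g)
        elif beta_rule == "PRP":
            # Polak-Ribiere-Polyak: g_new^T (g_new - g) / ||g||^2
            y = [gn - go for gn, go in zip(g_new, g)]
            beta = dot(g_new, y) / dot(g, g)
        else:
            raise ValueError("beta_rule must be 'FR' or 'PRP'")
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
    return x, max_iter


# Hypothetical 2x2 test problem; its minimizer solves A x = b.
A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
x_fr, iters_fr = cg_quadratic(A, b, [2.0, 1.0], beta_rule="FR")
x_prp, iters_prp = cg_quadratic(A, b, [2.0, 1.0], beta_rule="PRP")
```

On a strictly convex quadratic with exact line search, successive gradients are orthogonal, so the FR and PRP coefficients coincide. The two rules differ on general nonlinear problems, which is the setting the abstract's comparison addresses.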

Mohamed Hamoda, Mohd Rivaie, Mustafa Mamat, and Zabidin Salleh

Hikari, Ltd.

In this paper, we suggest a new nonlinear conjugate gradient method for solving large-scale unconstrained optimization problems. We prove that the new conjugate gradient coefficient βk with exact line search is globally convergent. Preliminary numerical results with a set of 116 unconstrained optimization problems show that βk is very promising and efficient when compared to the other conjugate gradient coefficients Fletcher-Reeves (FR) and Polak-Ribière-Polyak (PRP).

Mohamed Hamoda, Mohd Rivaie, Mustafa Mamat, and Zabidin Salleh

Hikari, Ltd.

In this paper, an efficient nonlinear modified PRP conjugate gradient method is presented for solving large-scale unconstrained optimization problems. The sufficient descent property is satisfied under the strong Wolfe-Powell (SWP) line search by restricting the parameter with the bound 1/4. The global convergence result is established under the SWP line search conditions. Numerical results on a set of 133 unconstrained optimization test problems show that this method performs better than the PRP and FR methods.

Mohamed Hamoda, Abdelrhaman Abashar, Mustafa Mamat, and Mohd Rivaie

AIP Publishing LLC

In this paper, we compare the performance profiles of the classical conjugate gradient coefficients FR and PRP with those of two new βk. These two new βk possess global convergence properties under the exact line search. Preliminary numerical results show that the two new βk are very promising and efficient when compared to the CG coefficients FR and PRP.