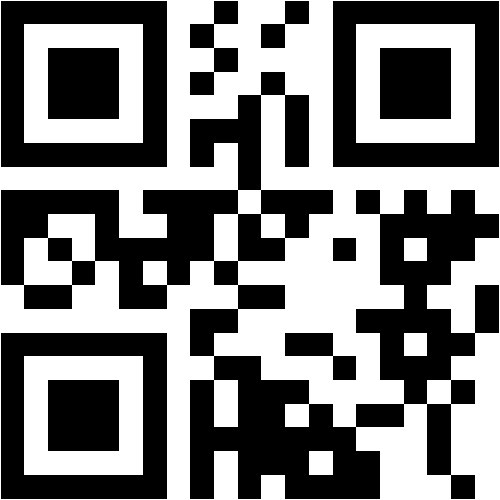

Giovanni Di Liberto

@tcd.ie

School of Computer Science and Statistics

Trinity College Dublin

RESEARCH, TEACHING, or OTHER INTERESTS

Cognitive Neuroscience, Sensory Systems, Linguistics and Language, Artificial Intelligence

Scopus Publications

Giovanni M. Di Liberto, Adam Attaheri, Giorgia Cantisani, Richard B. Reilly, Áine Ní Choisdealbha, Sinead Rocha, Perrine Brusini, and Usha Goswami

Springer Science and Business Media LLC

Even prior to producing their first words, infants are developing a sophisticated speech processing system, with robust word recognition present by 4–6 months of age. These emergent linguistic skills, observed with behavioural investigations, are likely to rely on increasingly sophisticated neural underpinnings. The infant brain is known to robustly track the speech envelope; however, previous cortical tracking studies were unable to demonstrate the presence of phonetic feature encoding. Here we utilise temporal response functions computed from electrophysiological responses to nursery rhymes to investigate the cortical encoding of phonetic features in a longitudinal cohort of infants when aged 4, 7 and 11 months, as well as adults. The analyses reveal an increasingly detailed and acoustically invariant phonetic encoding emerging over the first year of life, providing neurophysiological evidence that the pre-verbal human cortex learns phonetic categories. By contrast, we found no credible evidence for age-related increases in cortical tracking of the acoustic spectrogram.

Sok Hui Jessica Tan, Marina Kalashnikova, Giovanni M. Di Liberto, Michael J. Crosse, and Denis Burnham

MIT Press

In face-to-face conversations, listeners gather visual speech information from a speaker's talking face that enhances their perception of the incoming auditory speech signal. This auditory–visual (AV) speech benefit is evident even in quiet environments but is stronger in situations that require greater listening effort such as when the speech signal itself deviates from listeners' expectations. One example is infant-directed speech (IDS) presented to adults. IDS has exaggerated acoustic properties that are easily discriminable from adult-directed speech (ADS). Although IDS is a speech register that adults typically use with infants, no previous neurophysiological study has directly examined whether adult listeners process IDS differently from ADS. To address this, the current study simultaneously recorded EEG and eye-tracking data from adult participants as they were presented with auditory-only (AO), visual-only, and AV recordings of IDS and ADS. Eye-tracking data were recorded because looking behavior to the speaker's eyes and mouth modulates the extent of AV speech benefit experienced. Analyses of cortical tracking accuracy revealed that cortical tracking of the speech envelope was significant in AO and AV modalities for IDS and ADS. However, the AV speech benefit [i.e., AV > (A + V)] was only present for IDS trials. Gaze behavior analyses indicated differences in looking behavior during IDS and ADS trials. Surprisingly, looking behavior to the speaker's eyes and mouth was not correlated with cortical tracking accuracy. Additional exploratory analyses indicated that attention to the whole display was negatively correlated with cortical tracking accuracy of AO and visual-only trials in IDS. Our results underscore the nuances involved in the relationship between neurophysiological AV speech benefit and looking behavior.

Anastasia Klimovich-Gray, Giovanni Di Liberto, Lucia Amoruso, Ander Barrena, Eneko Agirre, and Nicola Molinaro

Elsevier BV

Paola Raquel Peña, Philip R Doyle, Emily Y.J. Ip, Giovanni Di Liberto, Darragh Higgins, Rachel McDonnell, Holly Branigan, Joakim Gustafson, Donald McMillan, Robert J Moore, et al.

ACM

ACM Reference Format: Paola Raquel Peña Huerta, Philip R. Doyle, Emily Y.J. Ip, Giovanni M. Di Liberto, Darragh Higgins, Rachel McDonnell, Holly Branigan, Joakim Gustafson, Donald McMillan, Robert J. Moore, and Benjamin R. Cowan. 2023. A Special Interest Group on Developing Theories of Language Use in Interaction with Conversational User Interfaces. In CHI Conference on Human Factors in Computing Systems Extended Abstracts (CHI ’23 Extended Abstracts). ACM, New York, NY, USA, 4 pages. https://doi.org/10.1145/3544549.3583179

Sara Carta, Anthony M.A. Mangiacotti, Alejandro Lopez Valdes, Richard B. Reilly, Fabia Franco, and Giovanni M. Di Liberto

Elsevier BV

Giorgia Cantisani, Amirhossein Chalehchaleh, Giovanni Di Liberto, and Shihab Shamma

ISCA

Mahmoud Keshavarzi, Giovanni M. Di Liberto, Fiona Gabrielczyk, Angela Wilson, Annabel Macfarlane, and Usha Goswami

Wiley

The prevalent "core phonological deficit" model of dyslexia proposes that the reading and spelling difficulties characterizing affected children stem from prior developmental difficulties in processing speech sound structure, for example, perceiving and identifying syllable stress patterns, syllables, rhymes and phonemes. Yet spoken word production appears normal. This suggests an unexpected disconnect between speech input and speech output processes. Here we investigated the output side of this disconnect from a speech rhythm perspective by measuring the speech amplitude envelope (AE) of multisyllabic spoken phrases. The speech AE contains crucial information regarding stress patterns, speech rate, tonal contrasts and intonational information. We created a novel computerized speech copying task in which participants copied aloud familiar spoken targets like “Aladdin.” Seventy‐five children with and without dyslexia were tested, some of whom were also receiving an oral intervention designed to enhance multi‐syllabic processing. Similarity of the child's productions to the target AE was computed using correlation and mutual information metrics. Similarity of pitch contour, another acoustic cue to speech rhythm, was used for control analyses. Children with dyslexia were significantly worse at producing the multi‐syllabic targets as indexed by both similarity metrics for computing the AE. However, children with dyslexia were not different from control children in producing pitch contours. Accordingly, the spoken production of multisyllabic phrases by children with dyslexia is atypical regarding the AE. Children with dyslexia may not appear to listeners to exhibit speech production difficulties because their pitch contours are intact.

Research Highlights: Speech production of syllable stress patterns is atypical in children with dyslexia. Children with dyslexia are significantly worse at producing the amplitude envelope of multi‐syllabic targets compared to both age‐matched and reading‐level‐matched control children. No group differences were found for pitch contour production between children with dyslexia and age‐matched control children. It may be difficult to detect speech output problems in dyslexia as pitch contours are relatively accurate.

S.H. Jessica Tan, Marina Kalashnikova, Giovanni M. Di Liberto, Michael J. Crosse, and Denis Burnham

Elsevier BV

Adam Attaheri, Dimitris Panayiotou, Alessia Phillips, Áine Ní Choisdealbha, Giovanni M. Di Liberto, Sinead Rocha, Perrine Brusini, Natasha Mead, Sheila Flanagan, Helen Olawole-Scott, et al.

Frontiers Media SA

Here we duplicate a neural tracking paradigm, previously published with infants (aged 4 to 11 months), with adult participants, in order to explore potential developmental similarities and differences in entrainment. Adults listened and watched passively as nursery rhymes were sung or chanted in infant-directed speech. Whole-head EEG (128 channels) was recorded, and cortical tracking of the sung speech in the delta (0.5–4 Hz), theta (4–8 Hz) and alpha (8–12 Hz) frequency bands was computed using linear decoders (multivariate Temporal Response Function models, mTRFs). Phase-amplitude coupling (PAC) was also computed to assess whether delta and theta phases temporally organize higher-frequency amplitudes for adults in the same pattern as found in the infant brain. Similar to previous infant participants, the adults showed significant cortical tracking of the sung speech in both delta and theta bands. However, the frequencies associated with peaks in stimulus-induced spectral power (PSD) in the two populations were different. PAC was also different in the adults compared to the infants. PAC was stronger for theta- versus delta-driven coupling in adults but was equal for delta- versus theta-driven coupling in infants. Adults also showed a stimulus-induced increase in low alpha power that was absent in infants. This may suggest adult recruitment of other cognitive processes, possibly related to comprehension or attention. The comparative data suggest that while infant and adult brains utilize essentially the same cortical mechanisms to track linguistic input, the operation of and interplay between these mechanisms may change with age and language experience.

Giovanni M. Di Liberto, Jens Hjortkjær, and Nima Mesgarani

Frontiers Media SA

Adam Attaheri, Áine Ní Choisdealbha, Giovanni M. Di Liberto, Sinead Rocha, Perrine Brusini, Natasha Mead, Helen Olawole-Scott, Panagiotis Boutris, Samuel Gibbon, Isabel Williams, et al.

Elsevier BV

Giovanni M. Di Liberto, Michele Barsotti, Giovanni Vecchiato, Jonas Ambeck-Madsen, Maria Del Vecchio, Pietro Avanzini, and Luca Ascari

Springer Science and Business Media LLC

Driving a car requires high cognitive demands, from sustained attention to perception and action planning. Recent research investigated the neural processes reflecting the planning of driving actions, aiming to better understand the factors leading to driving errors and to devise methodologies to anticipate and prevent such errors by monitoring the driver’s cognitive state and intention. While such anticipation was shown for discrete driving actions, such as emergency braking, there is no evidence for robust neural signatures of continuous action planning. This study aims to fill this gap by investigating continuous steering actions during a driving task in a car simulator with multimodal recordings of behavioural and electroencephalography (EEG) signals. System identification is used to assess whether robust neurophysiological signatures emerge before steering actions. Linear decoding models are then used to determine whether such cortical signals can predict continuous steering actions with progressively longer anticipation. Results point to significant EEG signatures of continuous action planning. Such neural signals show consistent dynamics across participants for anticipations up to 1 s, while individual-subject neural activity could reliably decode steering actions and predict future actions for anticipations up to 1.8 s. Finally, we use canonical correlation analysis to attempt disentangling brain and non-brain contributors to the EEG-based decoding. Our results suggest that low-frequency cortical dynamics are involved in the planning of steering actions and that EEG is sensitive to that neural activity. As a result, we propose a framework to investigate anticipatory neural activity in realistic continuous motor tasks.

Michael P. Broderick, Giovanni M. Di Liberto, Andrew J. Anderson, Adrià Rofes, and Edmund C. Lalor

Springer Science and Business Media LLC

Healthy ageing leads to changes in the brain that impact upon sensory and cognitive processing. It is not fully clear how these changes affect the processing of everyday spoken language. Prediction is thought to play an important role in language comprehension, where information about upcoming words is pre-activated across multiple representational levels. However, evidence from electrophysiology suggests differences in how older and younger adults use context-based predictions, particularly at the level of semantic representation. We investigate these differences during natural speech comprehension by presenting older and younger subjects with continuous, narrative speech while recording their electroencephalogram. We use time-lagged linear regression to test how distinct computational measures of (1) semantic dissimilarity and (2) lexical surprisal are processed in the brains of both groups. Our results reveal dissociable neural correlates of these two measures that suggest differences in how younger and older adults successfully comprehend speech. Specifically, our results suggest that, while younger and older subjects both employ context-based lexical predictions, older subjects are significantly less likely to pre-activate the semantic features relating to upcoming words. Furthermore, across our group of older adults, we show that the weaker the neural signature of this semantic pre-activation mechanism, the lower a subject’s semantic verbal fluency score. We interpret these findings as prediction playing a generally reduced role at a semantic level in the brains of older listeners during speech comprehension and that these changes may be part of an overall strategy to successfully comprehend speech with reduced cognitive resources.

Michael J. Crosse, Nathaniel J. Zuk, Giovanni M. Di Liberto, Aaron R. Nidiffer, Sophie Molholm, and Edmund C. Lalor

Frontiers Media SA

Cognitive neuroscience, in particular research on speech and language, has seen an increase in the use of linear modeling techniques for studying the processing of natural, environmental stimuli. The availability of such computational tools has prompted similar investigations in many clinical domains, facilitating the study of cognitive and sensory deficits under more naturalistic conditions. However, studying clinical (and often highly heterogeneous) cohorts introduces an added layer of complexity to such modeling procedures, potentially leading to instability of such techniques and, as a result, inconsistent findings. Here, we outline some key methodological considerations for applied research, referring to a hypothetical clinical experiment involving speech processing and worked examples of simulated electrophysiological (EEG) data. In particular, we focus on experimental design, data preprocessing, stimulus feature extraction, model design, model training and evaluation, and interpretation of model weights. Throughout the paper, we demonstrate the implementation of each step in MATLAB using the mTRF-Toolbox and discuss how to address issues that could arise in applied research. In doing so, we hope to provide better intuition on these more technical points and provide a resource for applied and clinical researchers investigating sensory and cognitive processing using ecologically rich stimuli.

Giovanni M. Di Liberto, Guilhem Marion, and Shihab A. Shamma

Society for Neuroscience

During music listening, humans routinely acquire the regularities of the acoustic sequences and use them to anticipate and interpret the ongoing melody. Specifically, in line with this predictive framework, it is thought that brain responses during such listening reflect a comparison between the bottom-up sensory responses and top-down prediction signals generated by an internal model that embodies the music exposure and expectations of the listener. To attain a clear view of these predictive responses, previous work has eliminated the sensory inputs by inserting artificial silences (or sound omissions) that leave behind only the corresponding predictions of the thwarted expectations. Here, we demonstrate a new alternate approach in which we decode the predictive electroencephalography (EEG) responses to the silent intervals that are naturally interspersed within the music. We did this as participants (experiment 1, 20 participants, 10 female; experiment 2, 21 participants, 6 female) listened to or imagined Bach piano melodies. Prediction signals were quantified and assessed via a computational model of the melodic structure of the music and were shown to exhibit the same response characteristics when measured during listening or imagining. These include an inverted polarity for both silence and imagined responses relative to listening, as well as response magnitude modulations that precisely reflect the expectations of notes and silences in both listening and imagery conditions. These findings therefore provide a unifying view that links results from many previous paradigms, including omission reactions and the expectation modulation of sensory responses, all in the context of naturalistic music listening.

SIGNIFICANCE STATEMENT: Music perception depends on our ability to learn and detect melodic structures. It has been suggested that our brain does so by actively predicting upcoming music notes, a process inducing instantaneous neural responses as the music confronts these expectations. Here, we studied this prediction process using EEGs recorded while participants listen to and imagine Bach melodies. Specifically, we examined neural signals during the ubiquitous musical pauses (or silent intervals) in a music stream and analyzed them in contrast to the imagery responses. We find that imagined predictive responses are routinely co-opted during ongoing music listening. These conclusions are revealed by a new paradigm using listening and imagery of naturalistic melodies.

Guilhem Marion, Giovanni M. Di Liberto, and Shihab A. Shamma

Society for Neuroscience

Musical imagery is the voluntary internal hearing of music in the mind without the need for physical action or external stimulation. Numerous studies have already revealed brain areas activated during imagery. However, it remains unclear to what extent imagined music responses preserve the detailed temporal dynamics of the acoustic stimulus envelope and, crucially, whether melodic expectations play any role in modulating responses to imagined music, as they prominently do during listening. These modulations are important as they reflect aspects of the human musical experience, such as its acquisition, engagement, and enjoyment. This study explored the nature of these modulations in imagined music based on EEG recordings from 21 professional musicians (6 females and 15 males). Regression analyses were conducted to demonstrate that imagined neural signals can be predicted accurately, similarly to the listening task, and were sufficiently robust to allow for accurate identification of the imagined musical piece from the EEG. In doing so, our results indicate that imagery and listening tasks elicited an overlapping but distinctive topography of neural responses to sound acoustics, which is in line with previous fMRI literature. Melodic expectation, however, evoked very similar frontal spatial activation in both conditions, suggesting that they are supported by the same underlying mechanisms. Finally, neural responses induced by imagery exhibited a specific transformation from the listening condition, which primarily included a relative delay and a polarity inversion of the response. This transformation demonstrates the top-down predictive nature of the expectation mechanisms arising during both listening and imagery.

SIGNIFICANCE STATEMENT: It is well known that the human brain is activated during musical imagery: the act of voluntarily hearing music in our mind without external stimulation. It is unclear, however, what the temporal dynamics of this activation are, as well as what musical features are precisely encoded in the neural signals. This study uses an experimental paradigm with high temporal precision to record and analyze the cortical activity during musical imagery. This study reveals that neural signals encode music acoustics and melodic expectations during both listening and imagery. Crucially, it is also found that a simple mapping based on a time-shift and a polarity inversion could robustly describe the relationship between listening and imagery signals.

Giovanni M. Di Liberto, Guilhem Marion, and Shihab A. Shamma

Frontiers Media SA

Music perception requires the human brain to process a variety of acoustic and music-related properties. Recent research used encoding models to tease apart and study the various cortical contributors to music perception. To do so, such approaches study temporal response functions that summarise the neural activity over several minutes of data. Here we tested the possibility of assessing the neural processing of individual musical units (bars) with electroencephalography (EEG). We devised a decoding methodology based on a maximum correlation metric across EEG segments (maxCorr) and used it to decode melodies from EEG based on an experiment where professional musicians listened to and imagined four Bach melodies multiple times. We demonstrate here that accurate decoding of melodies in single subjects and at the level of individual musical units is possible, both from EEG signals recorded during listening and imagination. Furthermore, we find that greater decoding accuracies are measured for the maxCorr method than for an envelope reconstruction approach based on backward temporal response functions (bTRFenv). These results indicate that low-frequency neural signals encode information beyond note timing, especially with respect to low-frequency cortical signals below 1 Hz, which are shown to encode pitch-related information. Along with the theoretical implications of these results, we discuss the potential applications of this decoding methodology in the context of novel brain-computer interface solutions.

Aisling E. O'Sullivan, Michael J. Crosse, Giovanni M. Di Liberto, Alain de Cheveigné, and Edmund C. Lalor

Society for Neuroscience

Seeing a speaker's face benefits speech comprehension, especially in challenging listening conditions. This perceptual benefit is thought to stem from the neural integration of visual and auditory speech at multiple stages of processing, whereby movement of a speaker's face provides temporal cues to auditory cortex, and articulatory information from the speaker's mouth can aid recognizing specific linguistic units (e.g., phonemes, syllables). However, it remains unclear how the integration of these cues varies as a function of listening conditions. Here, we sought to provide insight on these questions by examining EEG responses in humans (males and females) to natural audiovisual (AV), audio, and visual speech in quiet and in noise. We represented our speech stimuli in terms of their spectrograms and their phonetic features and then quantified the strength of the encoding of those features in the EEG using canonical correlation analysis (CCA). The encoding of both spectrotemporal and phonetic features was shown to be more robust in AV speech responses than what would have been expected from the summation of the audio and visual speech responses, suggesting that multisensory integration occurs at both spectrotemporal and phonetic stages of speech processing. We also found evidence to suggest that the integration effects may change with listening conditions; however, this was an exploratory analysis and future work will be required to examine this effect using a within-subject design. These findings demonstrate that integration of audio and visual speech occurs at multiple stages along the speech processing hierarchy.

SIGNIFICANCE STATEMENT: During conversation, visual cues impact our perception of speech. Integration of auditory and visual speech is thought to occur at multiple stages of speech processing and vary flexibly depending on the listening conditions. Here, we examine audiovisual (AV) integration at two stages of speech processing using the speech spectrogram and a phonetic representation, and test how AV integration adapts to degraded listening conditions. We find significant integration at both of these stages regardless of listening conditions. These findings reveal neural indices of multisensory interactions at different stages of processing and provide support for the multistage integration framework.

Giovanni M Di Liberto, Claire Pelofi, Roberta Bianco, Prachi Patel, Ashesh D Mehta, Jose L Herrero, Alain de Cheveigné, Shihab Shamma, and Nima Mesgarani

eLife Sciences Publications, Ltd

Human engagement in music rests on underlying elements such as the listeners’ cultural background and interest in music. These factors modulate how listeners anticipate musical events, a process inducing instantaneous neural responses as the music confronts these expectations. Measuring such neural correlates would represent a direct window into high-level brain processing. Here we recorded cortical signals as participants listened to Bach melodies. We assessed the relative contributions of acoustic versus melodic components of the music to the neural signal. Melodic features included information on pitch progressions and their tempo, which were extracted from a predictive model of musical structure based on Markov chains. We related the music to brain activity with temporal response functions demonstrating, for the first time, distinct cortical encoding of pitch and note-onset expectations during naturalistic music listening. This encoding was most pronounced at response latencies up to 350 ms, and in both planum temporale and Heschl’s gyrus.

Giovanni M. Di Liberto, Claire Pelofi, Shihab Shamma, and Alain de Cheveigné

Acoustical Society of Japan

Giovanni M. Di Liberto, Claire Pelofi, Shihab Shamma, and Alain de Cheveigné. Affiliations: Laboratoire des Systèmes Perceptifs, UMR 8248, CNRS, France; Ecole Normale Supérieure, PSL University, France; Institut de Neurosciences des Systèmes, UMR S 1106, INSERM, Aix Marseille Université, France; Institute for Systems Research, Electrical and Computer Engineering, University of Maryland, College Park, USA; UCL Ear Institute, London, United Kingdom.

Giovanni M. Di Liberto, Daniel Wong, Gerda Ana Melnik, and Alain de Cheveigné

Elsevier BV

Alain de Cheveigné, Giovanni M. Di Liberto, Dorothée Arzounian, Daniel D.E. Wong, Jens Hjortkjær, Søren Fuglsang, and Lucas C. Parra

Elsevier BV

Marina Kalashnikova, Varghese Peter, Giovanni M. Di Liberto, Edmund C. Lalor, and Denis Burnham

Springer Science and Business Media LLC

This study assessed cortical tracking of temporal information in incoming natural speech in seven-month-old infants. Cortical tracking refers to the process by which neural activity follows the dynamic patterns of the speech input. In adults, it has been shown to involve attentional mechanisms and to facilitate effective speech encoding. However, in infants, cortical tracking or its effects on speech processing have not been investigated. This study measured cortical tracking of speech in infants and, given the involvement of attentional mechanisms in this process, cortical tracking of both infant-directed speech (IDS), which is highly attractive to infants, and the less captivating adult-directed speech (ADS), were compared. IDS is the speech register parents use when addressing young infants. In comparison to ADS, it is characterised by several acoustic qualities that capture infants’ attention to linguistic input and assist language learning. Seven-month-old infants’ cortical responses were recorded via electroencephalography as they listened to IDS or ADS recordings. Results showed stronger low-frequency cortical tracking of the speech envelope in IDS than in ADS. This suggests that IDS has a privileged status in facilitating successful cortical tracking of incoming speech which may, in turn, augment infants’ early speech processing and even later language development.

Giovanni M. Di Liberto, Varghese Peter, Marina Kalashnikova, Usha Goswami, Denis Burnham, and Edmund C. Lalor

Elsevier BV

Alain de Cheveigné, Daniel D.E. Wong, Giovanni M. Di Liberto, Jens Hjortkjær, Malcolm Slaney, and Edmund Lalor

Elsevier BV