AMARNATH PATHAK

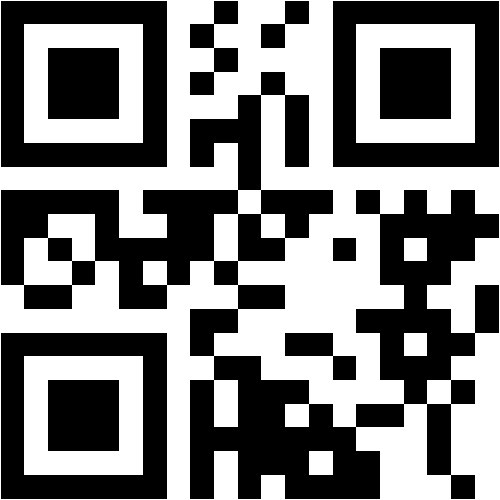

@nitmz.ac.in

PGT Computer Science

Kendriya Vidyalaya Khairagarh

EDUCATION

PhD NIT Mizoram

13

Scopus Publications

251

Scholar Citations

8

Scholar h-index

8

Scholar i10-index

Scopus Publications

- Recognising formula entailment using long short-term memory network

Amarnath Pathak and Partha Pakray

SAGE Publications

The article presents an approach to recognise formula entailment, which concerns finding entailment relationships between pairs of math formulae. As the current formula-similarity-detection approaches fail to account for broader relationships between pairs of math formulae, recognising formula entailment becomes paramount. To this end, a long short-term memory (LSTM) neural network using symbol-by-symbol attention for recognising formula entailment is implemented. However, owing to the unavailability of relevant training and validation corpora, the first and foremost step is to create a sufficiently large-sized symbol-level MATHENTAIL data set in an automated fashion. Depending on the extent of similarity between the corresponding symbol embeddings, the symbol pairs in the MATHENTAIL data set are assigned ‘entailment’ or ‘neutral’ labels. An improved symbol-to-vector (isymbol2vec) method generates mathematical symbols (in LaTeX) and their embeddings using the Wikipedia corpus of scientific documents and Continuous Bag of Words (CBOW) architecture. Eventually, the LSTM network, trained and validated using the MATHENTAIL data set, predicts formulae entailment for test formulae pairs with a reasonable accuracy of 62.2%.

- MathIRs: A One-Stop Solution to Several Mathematical Information Retrieval Needs

Amarnath Pathak, Partha Pakray, and Ranjita Das

Springer Nature Singapore

- Scientific Text Entailment and a Textual-Entailment-based framework for cooking domain question answering

Amarnath Pathak, Riyanka Manna, Partha Pakray, Dipankar Das, Alexander Gelbukh, and Sivaji Bandyopadhyay

Springer Science and Business Media LLC

- English–Mizo Machine Translation using neural and statistical approaches

Amarnath Pathak, Partha Pakray, and Jereemi Bentham

Springer Science and Business Media LLC

- Neural machine translation for Indian languages

Amarnath Pathak and Partha Pakray

Walter de Gruyter GmbH

Machine Translation bridges communication barriers and eases interaction among people having different linguistic backgrounds. Machine Translation mechanisms exploit a range of techniques and linguistic resources for translation prediction. Neural machine translation (NMT), in particular, seeks optimality in translation through training of neural network, using a parallel corpus having a considerable number of instances in the form of a parallel running source and target sentences. Easy availability of parallel corpora for major Indian language forms and the ability of NMT systems to better analyze context and produce fluent translation make NMT a prominent choice for the translation of Indian languages. We have trained, tested, and analyzed NMT systems for English to Tamil, English to Hindi, and English to Punjabi translations. Predicted translations have been evaluated using Bilingual Evaluation Understudy and by human evaluators to assess the quality of translation in terms of its adequacy, fluency, and correspondence with human-predicted translation.

- LSTM neural network based math information retrieval

Amarnath Pathak, Partha Pakray, and Ranjita Das

IEEE

The work presented in this paper ascertains the role of Long Short-Term Memory (LSTM) neural network in Math Information Retrieval (MIR). Motivated from promising performances of the LSTM for sequence-to-sequence tasks, an LSTM based Formula Entailment (LFE) module is implemented for recognizing entailment between mathematical user query and document formulae. The LFE module is trained and validated using a symbol level Math Formula Entailment (MENTAIL) dataset. The relevance of a document is determined by the fraction of document formulae which entail the user query. A reasonable score of 0.45 for the P_5 evaluation measure substantiates competence of the implemented MIR system in retrieving relevant documents corresponding to a mathematical user query.

- Extracting context of math formulae contained inside scientific documents

Amarnath Pathak, Ranjita Das, Partha Pakray, and Alexander Gelbukh

Instituto Politecnico Nacional/Centro de Investigacion en Computacion

A math formula present inside a scientific document is often preceded by its textual description, which is commonly referred to as the context of formula. Annotating context to the formula enriches its semantics, and consequently impacts the retrieval of mathematical contents from scientific documents. Also, with a considerable surety, a context can be assumed to be one of the Noun Phrases (NPs) of the sentence in which formula occurs. However, the presence of several different misleading NPs in the sentence necessitates extraction of an NP, which is more precise to the formula than the rest. Although a fair number of methods are developed for precise context extraction, it can be fascinating to prospect other competent techniques which can further their performances. To this end, this paper discusses implementation of an automated context extraction system, which follows certain heuristics in assigning weights to different candidate NPs, and tunes those weights using a development set comprising annotated formulae. The implemented system significantly outperforms nearest noun and sentence–pattern based methods on the ground of F–score.

- Binary vector transformation of math formula for mathematical information retrieval

Amarnath Pathak, Partha Pakray, and Alexander Gelbukh

IOS Press

- Mining Fuzzy Classification Rules with Exceptions: A Comparative Study

Amarnath Pathak, Dhruv Goel, and Somen Debnath

Springer Singapore

- A formula embedding approach to math information retrieval

Amarnath Pathak, Partha Pakray, and Alexander Gelbukh

Instituto Politecnico Nacional/Centro de Investigacion en Computacion

Intricate math formulae, which majorly constitute the content of scientific documents, add to the complexity of scientific document retrieval. Although modifications in conventional indexing and search mechanisms have eased the complexity and exhibited notable performance, the formula embedding approach to scientific document retrieval sounds equally appealing and promising. Formula Embedding Module of the proposed system uses a Bit Position Information Table to transform math formulae, contained inside scientific documents, into binary formulae vectors. Each set bit of a formula vector designates presence of a specific mathematical entity. Mathematical user query is transformed into query vector, in similar fashion, and the corresponding relevant documents are retrieved. Relevance of a search result is characterized by extent of similarity between the indexed formula vector and the query vector. Promising performance, under moderately constrained situation, substantiates competence of the proposed approach.

- An HMM Based POS Tagger for POS Tagging of Code-Mixed Indian Social Media Text

Partha Pakray, Goutam Majumder, and Amarnath Pathak

Springer Singapore

- An Improved and Intelligent Boolean Model for Scientific Text Information Retrieval

Amarnath Pathak and Partha Pakray

Springer Singapore

- MathIRs: Retrieval system for scientific documents

Amarnath Pathak, Partha Pakray, Sandip Sarkar, Dipankar Das, and Alexander Gelbukh

Instituto Politecnico Nacional/Centro de Investigacion en Computacion

Effective retrieval of mathematical contents from vast corpus of scientific documents demands enhancement in the conventional indexing and searching mechanisms. Indexing mechanism and the choice of semantic similarity measures guide the results of Math Information Retrieval system (MathIRs) to perfection. Tokenization and formula unification are among the distinguishing features of indexing mechanism, used in MathIRs, which facilitate sub-formula and similarity search. Besides, the scientific documents and the user queries in MathIRs will contain math as well as text contents and to match these contents we require three important modules: Text-Text Similarity (TS), Math-Math Similarity (MS) and Text-Math Similarity (TMS). In this paper we have proposed MathIRs comprising these important modules and a substitution tree based mechanism for indexing mathematical expressions. We have also presented experimental results for similarity search and argued that proposal of MathIRs will ease the task of scientific document retrieval.

RECENT SCHOLAR PUBLICATIONS

- Recognising formula entailment using long short-term memory network

A Pathak, P Pakray

Journal of Information Science, 01655515231184826 2023

- MathIRs: A One-Stop Solution to Several Mathematical Information Retrieval Needs

A Pathak, P Pakray, R Das

Proceedings of International Conference on Frontiers in Computing and 2022

- Scientific text entailment and a textual-entailment-based framework for cooking domain question answering

A Pathak, R Manna, P Pakray, D Das, A Gelbukh, S Bandyopadhyay

Sādhanā 46 (1), 24 2021

- Context guided retrieval of math formulae from scientific documents

A Pathak, P Pakray, R Das

Journal of Information and Optimization Sciences 40 (8), 1559-1574 2019

- English–Mizo machine translation using neural and statistical approaches

A Pathak, P Pakray, J Bentham

Neural Computing and Applications 31 (11), 7615-7631 2019

- Extracting context of math formulae contained inside scientific documents

A Pathak, R Das, P Pakray, A Gelbukh

Computación y Sistemas 23 (3), 803-818 2019

- Binary vector transformation of math formula for mathematical information retrieval

A Pathak, P Pakray, A Gelbukh

Journal of Intelligent & Fuzzy Systems, 1-11 2019

- LSTM neural network based math information retrieval

A Pathak, P Pakray, R Das

2019 Second International Conference on Advanced Computational and 2019

- A formula embedding approach to math information retrieval

A Pathak, P Pakray, A Gelbukh

Computación y Sistemas 22 (3), 819-833 2018

- An Improved and Intelligent Boolean Model for Scientific Text Information Retrieval

A Pathak, P Pakray

Communications in Computer and Information Science (CCIS), Springer 836, 465-476 2018

- An HMM Based POS Tagger for POS Tagging of Code-Mixed Indian Social Media Text

P Pakray, G Majumder, A Pathak

Communications in Computer and Information Science (CCIS), Springer 836, 495-504 2018

- Neural Machine Translation for Indian Languages

A Pathak, P Pakray

Journal of Intelligent Systems 2018

- Mining Fuzzy Classification Rules with Exceptions: A Comparative Study

A Pathak, D Goel, S Debnath

Proceedings of the International Conference on Computing and Communication 2018

- Exception discovery using ant colony optimisation

S Ratnoo, A Pathak, J Ahuja, J Vashishtha

International Journal of Computational Systems Engineering 4 (1), 46-57 2018

- A STUDY ON MINING FUZZY CLASSIFICATION RULES WITH EXCEPTIONS

S Debnath, A Pathak

2018

- Mathirs: Retrieval system for scientific documents

A Pathak, P Pakray, S Sarkar, D Das, A Gelbukh

Computación y Sistemas 21 (2), 253-265 2017

- Classification rule and exception mining using nature inspired algorithms

A Pathak, J Vashistha

International Journal of Computer Science and Information Technologies 6 (3 2015

MOST CITED SCHOLAR PUBLICATIONS

- English–Mizo machine translation using neural and statistical approaches

A Pathak, P Pakray, J Bentham

Neural Computing and Applications 31 (11), 7615-7631 2019

Citations: 70

- Neural Machine Translation for Indian Languages

A Pathak, P Pakray

Journal of Intelligent Systems 2018

Citations: 57

- LSTM neural network based math information retrieval

A Pathak, P Pakray, R Das

2019 Second International Conference on Advanced Computational and 2019

Citations: 22

- A formula embedding approach to math information retrieval

A Pathak, P Pakray, A Gelbukh

Computación y Sistemas 22 (3), 819-833 2018

Citations: 19

- Mathirs: Retrieval system for scientific documents

A Pathak, P Pakray, S Sarkar, D Das, A Gelbukh

Computación y Sistemas 21 (2), 253-265 2017

Citations: 19

- Binary vector transformation of math formula for mathematical information retrieval

A Pathak, P Pakray, A Gelbukh

Journal of Intelligent & Fuzzy Systems, 1-11 2019

Citations: 15

- Classification rule and exception mining using nature inspired algorithms

A Pathak, J Vashistha

International Journal of Computer Science and Information Technologies 6 (3 2015

Citations: 13

- Scientific text entailment and a textual-entailment-based framework for cooking domain question answering

A Pathak, R Manna, P Pakray, D Das, A Gelbukh, S Bandyopadhyay

Sādhanā 46 (1), 24 2021

Citations: 11

- Context guided retrieval of math formulae from scientific documents

A Pathak, P Pakray, R Das

Journal of Information and Optimization Sciences 40 (8), 1559-1574 2019

Citations: 8

- An HMM Based POS Tagger for POS Tagging of Code-Mixed Indian Social Media Text

P Pakray, G Majumder, A Pathak

Communications in Computer and Information Science (CCIS), Springer 836, 495-504 2018

Citations: 7

- Exception discovery using ant colony optimisation

S Ratnoo, A Pathak, J Ahuja, J Vashishtha

International Journal of Computational Systems Engineering 4 (1), 46-57 2018

Citations: 4

- Extracting context of math formulae contained inside scientific documents

A Pathak, R Das, P Pakray, A Gelbukh

Computación y Sistemas 23 (3), 803-818 2019

Citations: 3

- An Improved and Intelligent Boolean Model for Scientific Text Information Retrieval

A Pathak, P Pakray

Communications in Computer and Information Science (CCIS), Springer 836, 465-476 2018

Citations: 3